The RL Environment Gold Rush

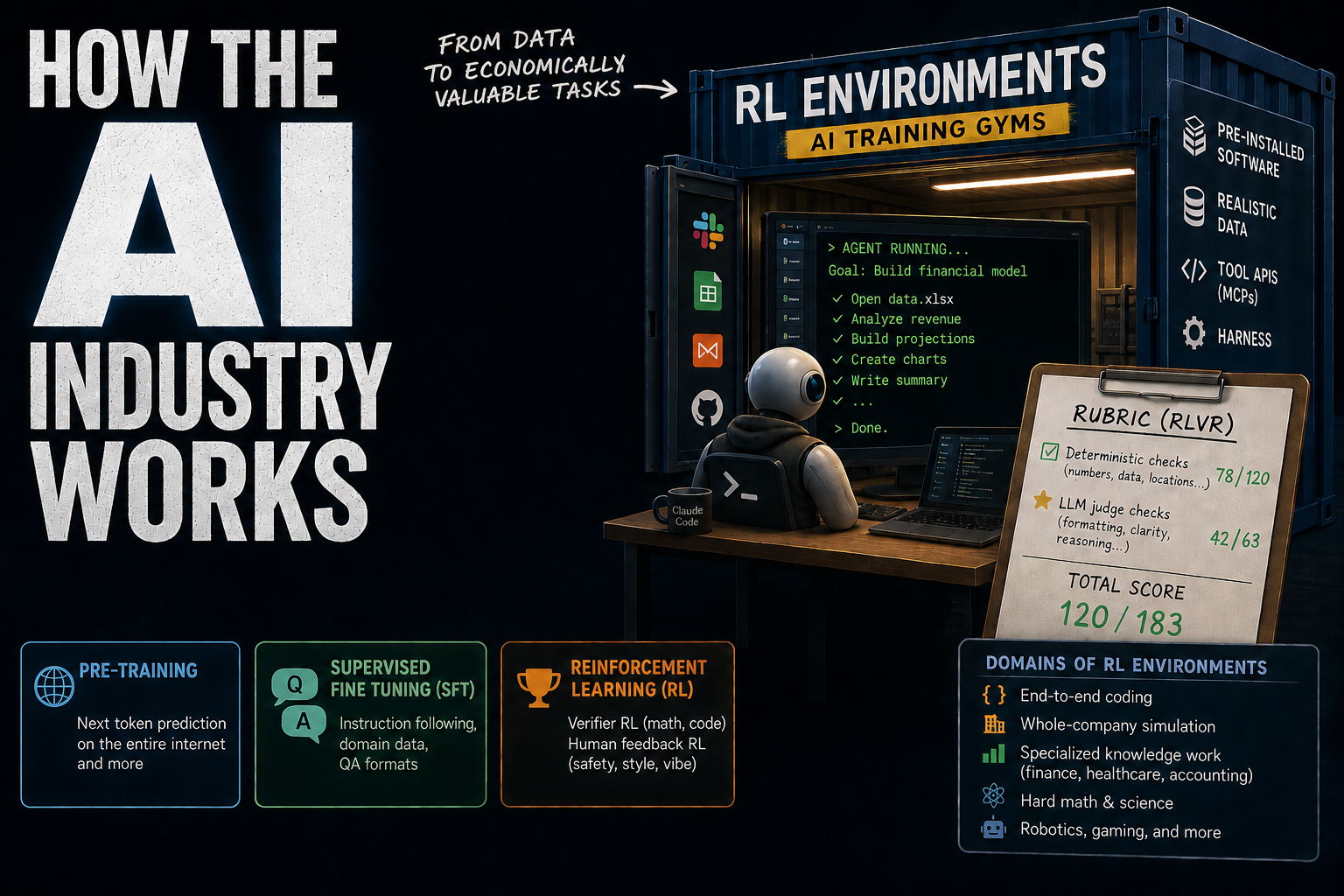

This post is about how frontier models are trained, what RL (Reinforcement Learning) environments are, and what the job actually looks like at the companies creating those environments.

RL environments are the third and latest wave of LLM RL, and the current focus of a lot of investment in AI. We'll cover how we got here and what's driving the shift.

Act I: How models are trained

Most of this Act may be review for you, so skip accordingly.

Overview

The standard AI model today is the Transformer, in particular LLMs. Currently, most model training efforts go toward them. And while the high-level Transformer architecture has evolved (e.g., MoE), it still looks similar to the original one from 2017.

So, what has changed?

One is, obviously, the Transformers have gotten bigger. We scaled compute and data.

Data acquisition for training is a huge industry. Big labs - OpenAI, Anthropic, xAI, Meta, and to some extent Microsoft and Amazon - are spending in the billions on data acquisition.

This has led to new companies whose focus is generating this data, and some are making a lot of money.

What does data look like these days?

Before getting into that, we'll give an overview of the training process.

There are 3 main stages:

- Pre-training.

- SFT, supervised fine-tuning.

- RL (a.k.a. post-training).

Pre-training

For pre-training, we want a dataset as large as possible, so we take anything we can scrape, like the entire corpus of the internet, books, etc.

These datasets can be in the terabytes - trillions of tokens.

You put it in the model, and it learns to predict the next token, as well as the general structure of language.

The pre-trained model is called a base model, which is a literal autocomplete.

It doesn't really know how to answer questions or do things like tool use.

Supervised fine-tuning

Next, the companies need to make these base models useful. That's when supervised fine-tuning comes in.

The idea here is to do the same thing as pretraining, but with specialized data.

For example, if we want our model to be good at finance and science, we can put in specialized finance data and science data. Since this comes after pre-training and training has a recency bias, the model becomes more sensitive to finance and science.

A big part of supervised fine-tuning is instruction following: the question and answer format.

We train the model with a lot of examples of question, answer, question, answer, question, answer sequences. Eventually, the model gets to a point where, when it sees a question, it outputs a response in an answer format.

SFT is called supervised because, following the classic ML taxonomy, every "input" (question) has an explicit, human-provided "label" (answer).

SFT is the same process as pre-training (just different data and scale), so we are not at RL yet.

Reinforcement Learning

After SFT, we get to RL. There are different types depending on what we want the model to get better at.

Two big ones are:

- RLVR (RL with Verifiable Rewards): for the reasoning side - math, coding, objective things.

- RLHF (RL with Human Feedback): for subjective things.

Typically, we do the objective stuff first.

The labs get models to do super well at math and science benchmarks by spending a ton of money buying data from real mathematicians and researchers to post-train the models on.

The amount of compute spent on this kind of reasoning RL has been growing rapidly - sometimes substantial relative to pre-training - which is known as scaling post-training.

And then we have the subjective stuff, like safety and creative writing. What's the model's personality? How does it speak? Is it unbiased? All that soft stuff is at the end.

We'll first talk about RL in general, and then about how it's applied to the different cases.

"Does RL modify the same weights in the model as training and SFT?"

There are 2 options, depending on the goal.

If we want to fundamentally uplift the model in science or some other domain, we bake it into the model.

There's also a technique called LoRA (low rank adapters) where you train an independent set of weights, and then you can optionally add those weights to the model to customize its behavior.

(LoRA trains pairs of smaller matrices which, when multiplied, produce a matrix with the same shape as the ones in the model, and then it's literally element-wise addition.)

So, during RL, you can train only the LoRA weights without touching the base model.

And then you can swap in and out different sets of LoRA weights to get different model personalities and specializations. That's how people do the more cosmetic stuff.

RL Overview

RL is a learning strategy based on doing a bunch of simulations and obtaining rewards.

We can think of the model itself as a "policy" that maps a "state" (the system prompt) to "actions" (the continuations).

Let's say we are trying to answer a math problem. We can run the model 100 times to answer it. That's called 100 rollouts.

Sometimes it gets it right, sometimes wrong. Based on how often it gets it right, we give it a score for how smart it is.

RL is the process by which you take the right responses and upgrade the weights that correspond to those, while downgrading the weights corresponding to the wrong responses.

There are several variants.

DPO

The easiest variant is DPO, direct preference optimization.

Have you used ChatGPT and gotten, "here's response A and response B, which do you like better?"

That's likely for DPO: you have 2 examples (rollouts), you pick one, and DPO increases the weight on the one you like better, and decreases the weight on the one you like worse.

People do this for a lot of things: safety, personality, vibes, aesthetics, and so forth.

The good thing about DPO is that it's an offline training method: the model doesn't need to run at all during training - the preference pair becomes training data directly.

Generating preference pairs at scale is what Scale AI used to do.

DPO has similarities with SFT in that you train the model offline. Online RL is more complicated.

GRPO

The 2nd type of RL, GRPO (Group Relative Policy Optimization), is used for more objective tasks like math and science. It was popularized by DeepSeek (in the DeepSeek-R1 paper).

A good proxy for "model is good at math" is being able to solve questions from math competitions.

We can take a dataset of competition questions, and, for each one, do a couple hundred runs of rollouts.

For GRPO to work, we need the model to get it right at least some times, ideally in a middle band (roughly 20-50%). If it's too hard, there's no positive signal at all; if it's too easy, the signal saturates.

All the AI labs now want data like this: domain-specific tasks - like math problems - where the model gets the task correct in that middle band.

In a typical setup, GRPO might do around 100 rollouts per question and calculate a score called an advantage function for each one.

The advantage function is how much better this rollout is compared to the average.

- If 50% of rollouts are correct, a correct rollout gets a modest positive advantage.

- But if only 10% are correct, a correct run gets a much larger positive advantage.

Based on this advantage function, we backpropagate the weights.

Unlike DPO, GRPO is an online training algorithm - we need to run the model during training time.

These days, the kind of tasks trained with GRPO for coding agents are end-to-end coding tasks. Then there's some grader that determines if the task was completed correctly or not.

The dataset size needed for a model to get good at one narrow skill may be in the 100s to 1000s of high-quality tasks, which is not that big.

For each task, the model does 100 rollouts, and each of these may take 1000 steps (output tokens).

Compared to pre-training and supervised fine-tuning, which take months, RL for one narrow skill may only take a couple of days.

PPO

PPO is the original RLHF algorithm used by OpenAI for ChatGPT. It uses a value model (a critic) to estimate baseline values, then computes advantage from that.

GRPO skips the critic by using the average score as the baseline.

Expert networks

Now, there are services like Mercor which manage expert networks. If a lab wants their model to be good at archaeology, they can go to Mercor to find archaeologists.

The archaeologists are gig workers, from grad students to professionals, and get paid to generate or annotate training data.

Expert networks can cover every niche of human knowledge, and that's how we get models that are pretty good at many distinct domains.

Act II: RL environments in practice

3rd Wave of RL

By this point, the models have already been trained to operate tools, write code, and think agentically.

Increasingly, the labs care about their models being good at what they call economically valuable tasks. Things like finance, accounting, insurance, and healthcare. Most office work.1

Examples of tasks may be:

- doing a financial analysis

- handling a complicated logistics scheduling problem

- managing emails with various stakeholders

- writing a report

So far, software engineering has been the main one.2 These days, models can write code fine. So now the goal is about end-to-end software engineering workflows. SRE, deployment, etc.

We need RL tasks to simulate all that.

Instead of asking experts to recreate tasks with realistic data, we can source the data from real companies, or at least from contractors doing actual work.

This shift toward simulating economically valuable tasks using more realistic data is the third wave of RL.

To put it in context, these are the kinds of data each training stage consumes:

- Pre-training: "Here's all the text we found."

- SFT: "Here's some advanced scientific text and question-answer sequences."

- First RL wave: "Here's some preference data from human labelers." (RLHF, Scale AI, DPO).

- Second RL wave: "Here are questions and answers generated by experts." (RLVR, expert networks, GRPO).

- Third RL wave: "Here are tasks done by real workers." (Agentic RL; still RLVR-style rewards, GRPO).

RL Environments

So, how do we formulate the tasks needed for agentic RL, exactly?

An RL environment (also called RL gyms or AI training gyms) is a sandboxed environment where an agent tries to complete a task.

There are companies that focus on producing these specialized environments.

They are typically a Docker container with:

1. Pre-installed software

Say the task requires Microsoft Word, Slack, and GitHub.

We can't put Microsoft Word or Slack in the container, but we can install open-source variants like LibreOffice and Mattermost. We do this for all software involved in the task.

2. MCPs

In the real world, agents interact with services like Slack via MCPs, so that's what we want them to get good at.

MCPs are basically an API with a description that tells the agent when to call each API. For example, the Slack API covers opening a channel, closing a channel, talking to a person, reading a channel, looking at a person's profile, etc.

In a Docker container, what we can do is build a wrapper around the open-source version of Slack so that, from the model's perspective, it looks exactly the same.

3. Data

Ideally, the data come from a real-world source. That's why big labs and startups alike are going to big companies across different domains and asking them to sell data.

4. A harness

Whenever the model outputs tokens corresponding to a tool call, there needs to be a layer above it pausing the generation, running the corresponding operation, and resuming the agent with the appropriate output. That's the harness. There's a lot more to it, but think "Claude Code". That's what we unleash in the environment, not just the model.

The tasks

After creating the Docker environment, we need tasks for the model to attempt in them.

The tasks are designed by human experts, who specify the goal (which becomes the task's starting prompt).

In each rollout we give the model a maximum number of steps or a timeout limit. The model either hits the limit or says "I'm done."

From there, we have some artifacts inside the environment that the model+harness created. For example, if the goal is to fill out an Excel model for finance, a sheet will be modified.

Eventually, the outcomes need to be plugged into GRPO, so the rollouts need to have a score based on those artifacts.

Each environment can have multiple tasks, but the rollouts always run in isolation; every rollout spins up a Docker container.

Grading

We may ask the expert, "If you did this Excel model well, what would the final sheet look like?"

The expert has to create a rubric. They can put in a mix of objective and subjective aspects.

Then the grader - also called verifier - runs to evaluate that rubric.

- Deterministic checks, like: are the numbers correct in the right places? Did the model mention what we told the model to mention? Did the model find the right data in the right places?3

- LLM judge checks: another LLM evaluates subjective parts of the rubric, giving a score. For example, if the model made a bar chart, is it well formatted?

A lot of the value of rubrics is in encoding tacit expert knowledge. For domains where knowledge isn't formalized - decision trade-offs, what a real scientist's intuitions look like - the rubric is how you bake those intuitions into the training signal.

Implementation-wise, the rubric looks like a JSON file with a list of checks. Deterministic checks contain references to Python functions, and LLM judge checks contain prompts for the grader LLM.

There can be 100-200 checks for a single task, so the models get a detailed score, like 120/183. That's what goes into GRPO.

Because the model is literally trained to the rubric, the rubric has to be really, really good.

After a training run, we can check whether the model improved by running it on a set of test tasks that we held out.

Domains of RL Environments

The big labs are spending a lot of money to have people make these Docker container environments, because that's the path toward automating jobs. So that's what startups are working on.

What are the domains people are targeting these days?

- End-to-end coding - the software development life cycle.

- Whole-company simulation. They take a whole company with all of the company's history of Jira tickets, Confluence, emails, Slack messages, and create tasks based on realistic work that has been done historically in this company. Sometimes, people get this data from failed startups, where it no longer serves any other purpose.

- Specialized knowledge work: domains like finance, accounting, healthcare. They will hire people to generate these Docker environments.

- Hard math and science: material science, biology, physics, and computer science itself, including AI research itself.

Those are the domains that people are mostly working with when we're talking about RL environments.

There's also robotics (a burgeoning field) and things like gaming.

RL Environment Recap

To recap, this is how RL environments work:

- Start with a model that already has baseline agentic capabilities (after pre-training, SFT, and earlier post-training).

- Put it in many specialized Docker environments with realistic tasks.

- Score each rollout with a detailed human-authored rubric.

- Feed those scores into GRPO, update the model, and repeat.

- Run this loop for a couple of days, then evaluate on held-out tasks.

- Repeat per domain/task family you want the model to improve on.

What this means if you're an engineer

- RL environments are very lucrative right now because they produce what the frontier labs want. There are dozens of companies doing this.

- RL environments require a substantial amount of engineering work to scale at quality, but it's not the most technically challenging work. A lot of the work is:

- Calibration. Recall that you want these tasks to land in that middle band of difficulty. A lot of it is mucking with the tasks to calibrate their difficulty.

- Random data work and data cleaning.

- Automating as much as possible to reduce the expert contractors' time.

- To work in this space as an engineer, you don't need much ML knowledge. You need to be a good engineer and know how to use agents well.

- If you work on RL environments, it's easy to get a job at a frontier lab because you've worked on their input data. It is a logical next step to become one of the researchers training on them.

Footnotes

-

Part of being able to do economically valuable tasks is being able to literally control a computer. Computer use, browser use, and terminal use are 3 dimensions of that. There are benchmarks for each: Terminal-Bench for CLI tasks, and OSWorld for full GUI tasks where the model sees raw screen pixels. Browser use is typically based on Chrome. ↩

-

The standard benchmark here is SWE-Bench, a collection of hard software engineering tasks. ↩

-

For math, you can formalize correctness of some tasks in Lean, though a lot of practical setups use simpler graders. ↩