Isomux Design and Architecture

In this post, I describe how I built isomux.com (github) and share my takeaways on designing agent management systems. I cover independently developed features like agent-collaboration skills, human collaboration at the agent-chat level via mixed human-agent message queues, a REST-API-for-UI architecture, hierarchical system prompts, and non-linear hand-off systems.

Introduction

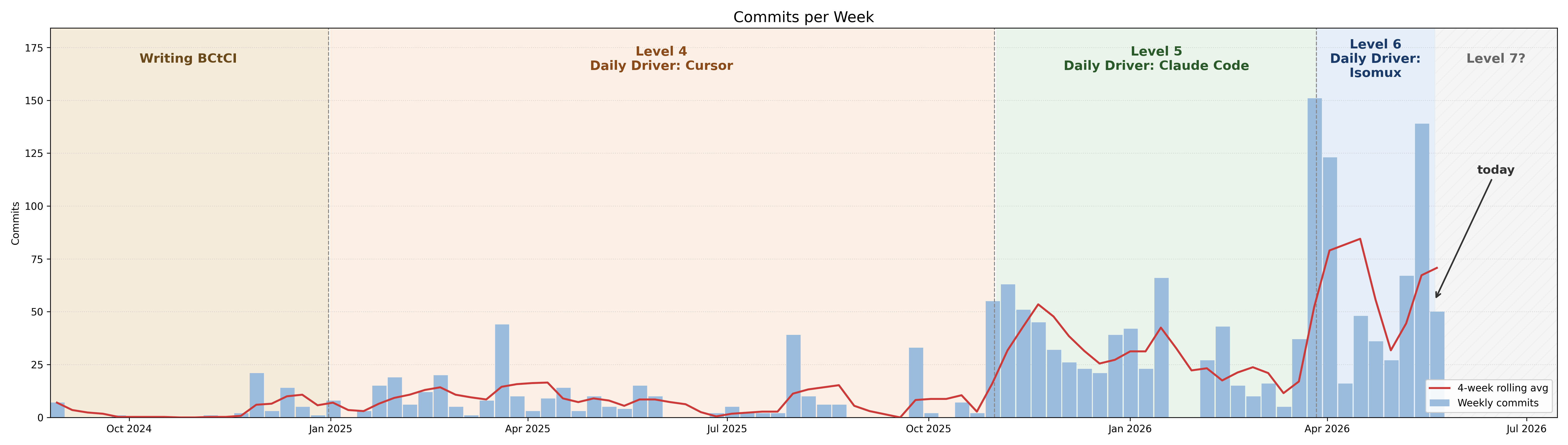

I reached Level 6 in Steve Yegge's hierarchy!

The main friction with running multiple agents in parallel was terminal management; I had a mix of local and remote sessions[1] and was ssh'ing all the time.

Tmux and cmux helped. But what actually did it for me was:

- Building a browser-based, multi-provider agent management tool.

- Running it on a server on the same VPN as my laptop and phone.

This simplified the two ends of my workflow:

- How I reach my agents: all devices see the same agents and conversations.

- What my agents can do: they all see the same environment.

That was the starting premise. Since then, I've been rethinking agent management. The new thesis is:

Every conversation should be multi-user, multi-agent, multi-provider, and multi-device.

Office metaphor

My tool, Isomux (Isometric Multiplexer), has something extra: it's cute.

I spend all day in the agent management tool... it had to be cute.

The idea is to create an office metaphor for agents (with isometric graphics for the nostalgia hit). Each agent has a customizable name and look and sits at a desk. You see who is working, who's sleeping, and who has their hand raised at a glance.

The thesis is that by anthropomorphizing agents, we reduce cognitive load; we're more used to coordinating humans than terminals.

Try the demo and see if it clicks. The source is on github.com/nmamano/isomux.

Architecture Overview

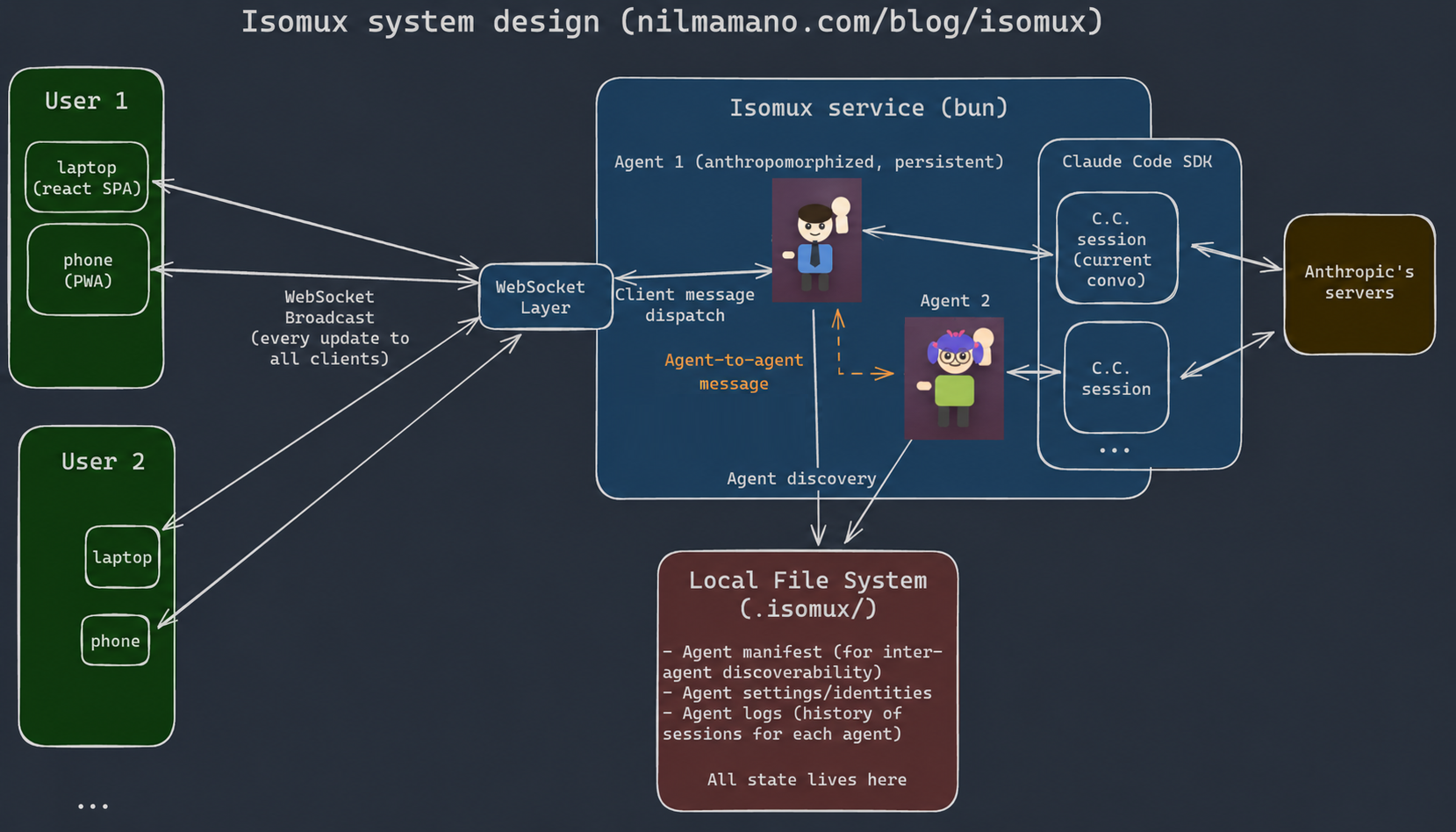

Isomux is a single Bun process that:

- serves the browser frontend,

- talks to browsers over WebSocket,

- manages agent lifecycles,

- and runs agent sessions on either the Claude Agent SDK (Anthropic) or the Codex CLI's App Server (OpenAI), behind a shared backend abstraction.

Side benefit: the WebSocket-broadcast model gives you real-time multi-user collaboration for free.

I've been building Isomux from inside Isomux since the first day. Developing a dev tool from within itself is fun!

Giving Agents Superpowers

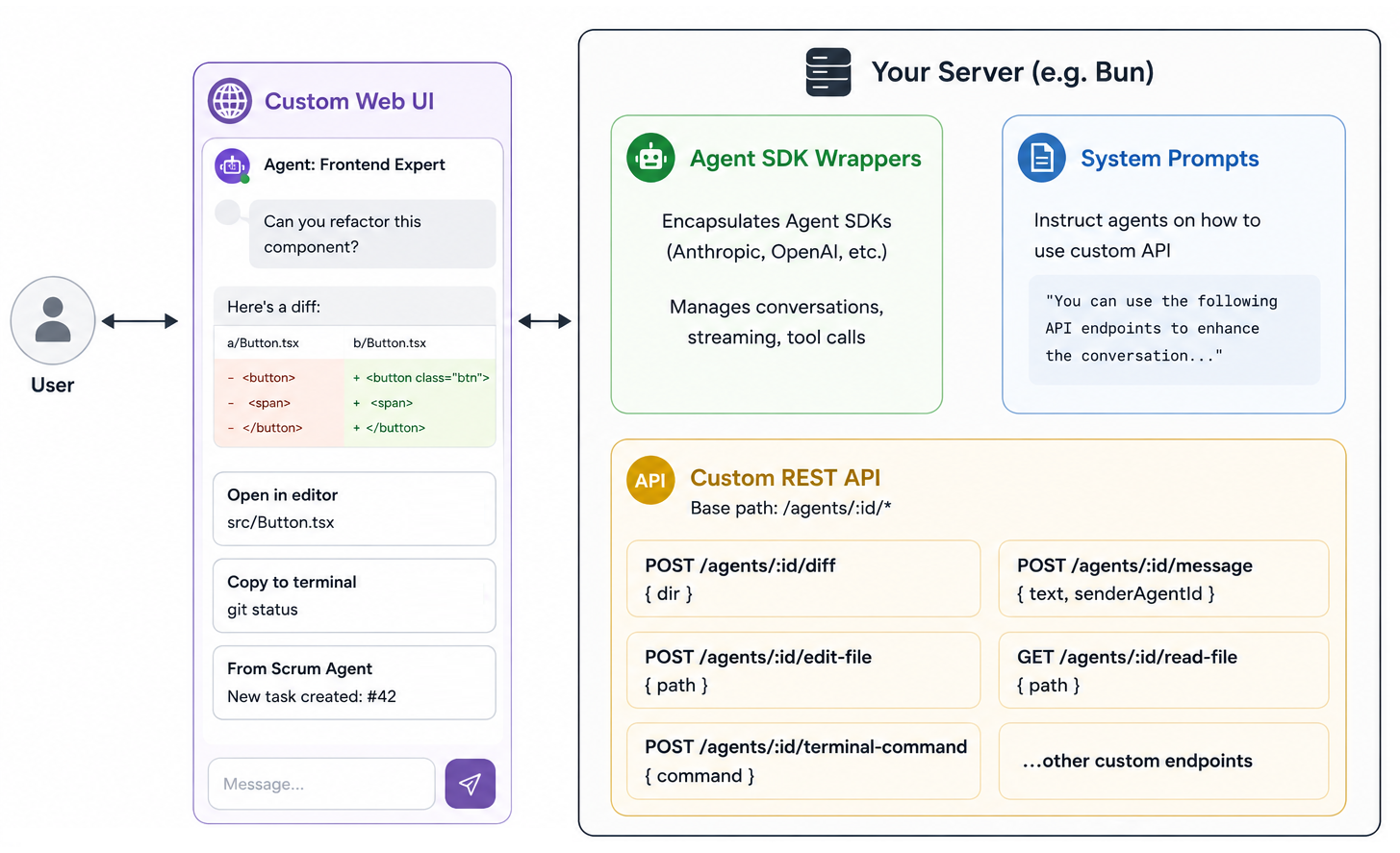

Here's the general recipe behind a lot of Isomux's features:

- Run your Agent SDK wrappers in your own server, with a custom UI on top.

- Add a REST API to the server for inserting custom components into agent conversations.

- Tell agents how to use the API in the system prompt.

Once those 3 pieces are in place, you can start giving your agents structured affordances:

- Render styled side-by-side diffs in chat:

POST /agents/:id/diff { dir }

- Surface "Open in editor" cards for files:

POST /agents/:id/edit-file { path }

- Surface "Copy to terminal" cards for commands:

POST /agents/:id/terminal-command { command }

- Send messages to other agents:

POST /agents/:id/message { text, senderAgentId }

- Render images in chat:

POST /agents/:id/read-file { path }

- Create and complete tasks on a shared task board:

POST /tasks

This pattern is used in many of the QoL features below.[2]
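
To make the recipe concrete, here is a minimal sketch of the server side of the pattern: a pure function that maps an incoming request to the chat component it should insert. The endpoint paths come from the list above; the ChatCard type, the routeToCard name, and the validation rules are assumptions for illustration, not Isomux's actual code.

```typescript
// Hypothetical card types that the chat UI would know how to render.
type ChatCard =
  | { kind: "diff"; dir?: string }
  | { kind: "edit-file"; path: string }
  | { kind: "terminal-command"; command: string };

type RoutedCard = { agentId: string; card: ChatCard };

// Map "POST /agents/:id/<feature>" plus a parsed JSON body to a card.
// Returns null for unknown routes or bodies missing a required field.
function routeToCard(
  method: string,
  path: string,
  body: Record<string, unknown>,
): RoutedCard | null {
  const match = /^\/agents\/([^/]+)\/([a-z-]+)$/.exec(path);
  if (method !== "POST" || match === null) return null;
  const agentId = match[1];
  const feature = match[2];
  const dir = body["dir"];
  const filePath = body["path"];
  const command = body["command"];

  switch (feature) {
    case "diff": // dir is optional
      return {
        agentId,
        card: typeof dir === "string" ? { kind: "diff", dir } : { kind: "diff" },
      };
    case "edit-file":
      return typeof filePath === "string"
        ? { agentId, card: { kind: "edit-file", path: filePath } }
        : null;
    case "terminal-command":
      return typeof command === "string"
        ? { agentId, card: { kind: "terminal-command", command } }
        : null;
    default:
      return null;
  }
}
```

In a real server, the returned card would be appended to the agent's conversation log and broadcast to connected browsers; that part is left out here.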

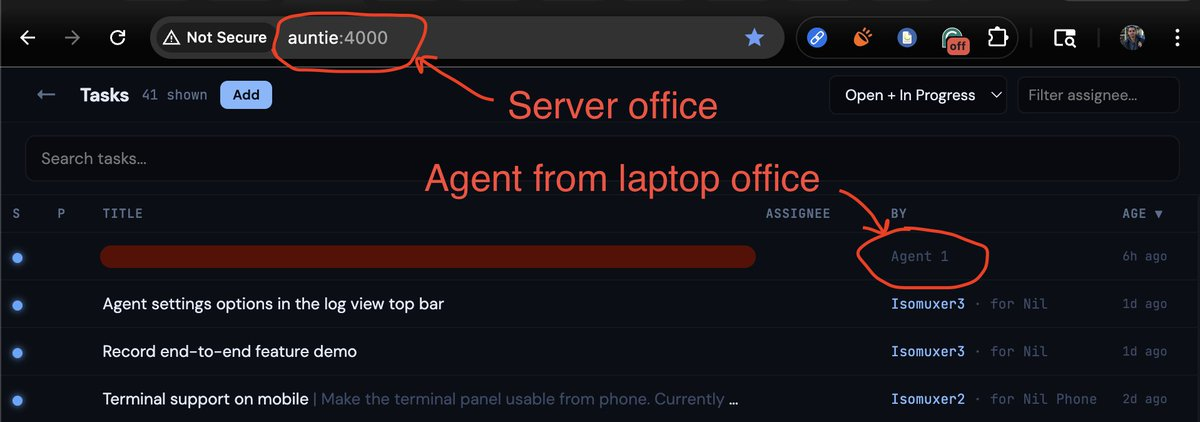

Office-to-office communication

One nice consequence: if two Isomux offices live in the same VPN, agents in one can call the REST endpoints of the other. They can drop tasks on each other's boards, send messages, etc., without any extra plumbing.

I discovered this accidentally.

Working with agents, not sessions

A nice abstraction in isomux is that you think in terms of persistent agents rather than ephemeral sessions. The former wraps the latter.

Persistent agents

When you click an empty desk to spawn an agent, you provide:

- a name and look,

- a working directory, for things like AGENTS.md and MCP servers defined in that directory,

- the backend (claude or codex), model, permission mode, thinking effort, etc.,

- an agent-specific system prompt.[3]

This information gets sent to the server and persisted in .isomux/. All connected browsers start receiving broadcasts with all the updates about the new agent, starting with the "agent spawned" event.

Isomux reads each agent's event stream in an async loop, converting SDK events into two things browsers care about: log entries for the conversation view, and agent state for the character animations and notifications (thinking, tool calling, waiting, etc.).
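
A rough sketch of that loop, assuming a simplified event shape: the SdkEvent variants, the state names, and the function names below are hypothetical stand-ins, not the real SDK types. The point is only the split into a pure "event in, view out" step and a thin async loop around it.

```typescript
// Hypothetical, simplified event and state shapes.
type AgentState = "thinking" | "tool_calling" | "waiting";

type SdkEvent =
  | { type: "thinking_started" }
  | { type: "tool_call"; tool: string }
  | { type: "assistant_text"; text: string }
  | { type: "turn_done" };

type LogEntry = { role: "assistant" | "tool"; text: string };
type AgentView = { state: AgentState; log: LogEntry[] };

// Pure step: one event in, updated log and state out.
function applyEvent(view: AgentView, ev: SdkEvent): AgentView {
  switch (ev.type) {
    case "thinking_started":
      return { ...view, state: "thinking" };
    case "tool_call":
      return {
        state: "tool_calling",
        log: [...view.log, { role: "tool", text: ev.tool }],
      };
    case "assistant_text":
      return { ...view, log: [...view.log, { role: "assistant", text: ev.text }] };
    case "turn_done":
      return { ...view, state: "waiting" };
  }
}

// Async loop: read events as they arrive and push each new view to a callback
// (in a server, the callback would broadcast to browsers).
async function pumpEvents(
  stream: AsyncIterable<SdkEvent>,
  onUpdate: (view: AgentView) => void,
): Promise<AgentView> {
  let view: AgentView = { state: "waiting", log: [] };
  for await (const ev of stream) {
    view = applyEvent(view, ev);
    onUpdate(view);
  }
  return view;
}
```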

The file system is the source of truth: if the server crashes or restarts, agents are restored from agents.json with their SDK sessions recreated, no loss. Past conversations can be resumed with a per-agent /resume history.

Isomux persists every conversation forever by design.[4] You never know when it could be useful.

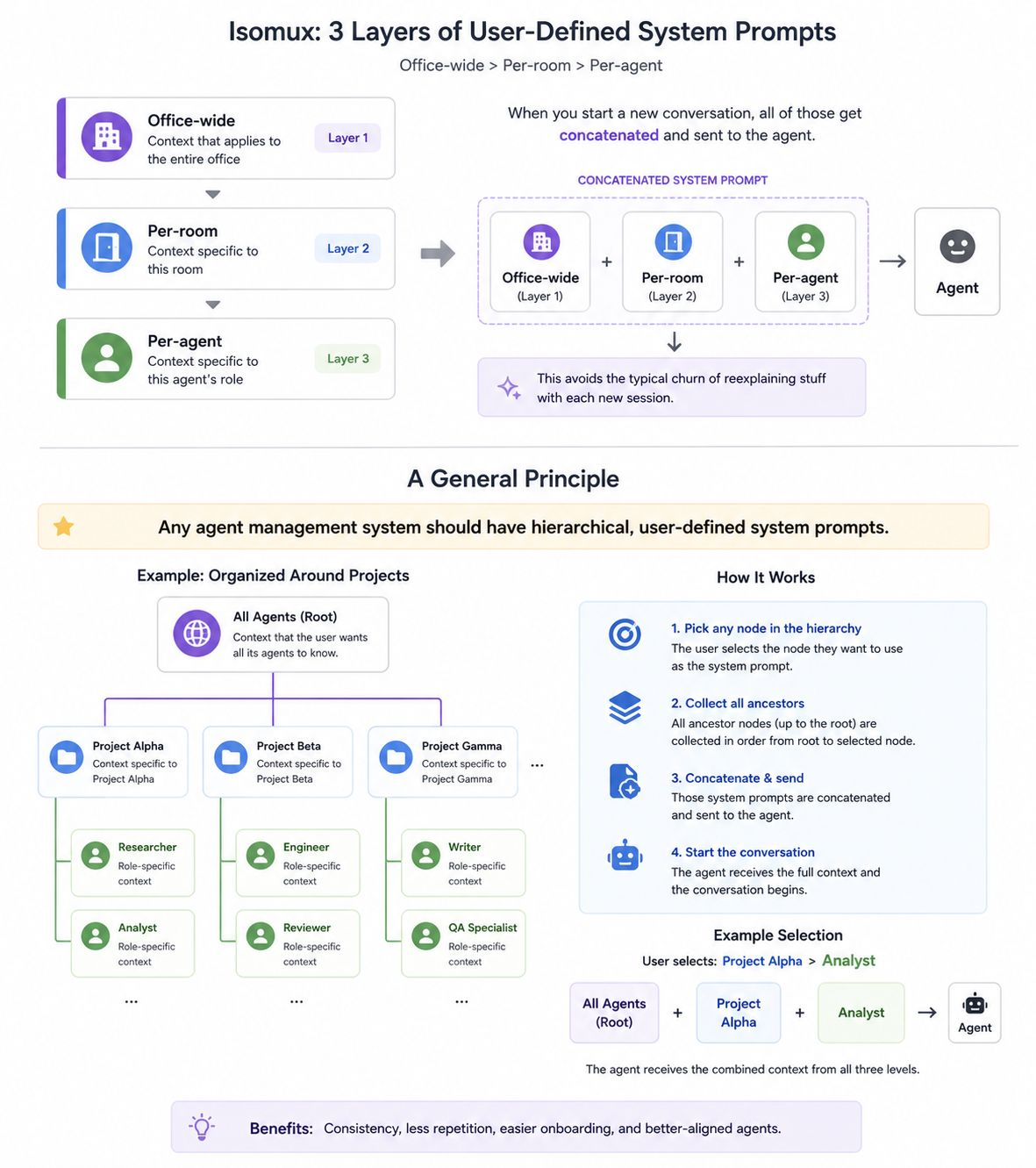

Hierarchical prompts

The system prompt is assembled from four hierarchical layers, concatenated into a single string and injected into the Claude Code CLI subprocess via the --append-system-prompt argument (see Persistent agents above for how).

- Baseline: hardcoded context explaining the office setting, the agent's identity (name and room), and isomux features.

- Office prompt: user-defined, applied to every agent in the office.

- Room prompt: user-defined, applied to every agent in a given room.

- Agent prompt: user-defined, applied to a single agent.

The baseline is designed to be brief, but leaves breadcrumbs so the agent can load in more state if it needs to. Each non-baseline layer is optional; empty ones are skipped entirely.

The room layer lets you group agents by project or role: e.g., you could have a room for your day job and a room for your side projects; each room may need different context, hence the room-wide prompt (you can even have different environment variables per room).

In fact, I believe that, as a general principle, any agent management system should have hierarchical, user-defined system prompts.

The full system prompt looks like this:

You are AGENT_NAME, an agent in room ROOM_NAME of the Isomux office.

Your goal is to help the office boss, who talks to you in this chat.

Messages are prefixed with the boss's name in brackets.

How to discover other office agents and their conversation logs: read ~/.isomux/agents-summary.json.

How to show a file to the boss: POST localhost:4000/agents/AGENT_ID/read-file with the file path. Images render inline; other files show as a "download" chip.

How to use the task board (localhost:4000/tasks): [...]

[... (more instructions for using isomux features)]

## Office Instructions

USER_DEFINED_OFFICE_WIDE_SYSTEM_PROMPT

## Instructions For Your Room: ROOM_NAME

USER_DEFINED_ROOM_SYSTEM_PROMPT

## Personal Instructions For You: AGENT_NAME

USER_DEFINED_AGENT_SPECIFIC_SYSTEM_PROMPT

See more on the task board and image showing features below.
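
The layering can be sketched as a small function. The section headings mirror the template above; the PromptLayers type and the buildSystemPrompt name are illustrative assumptions, and the baseline text is passed in rather than hardcoded.

```typescript
// Hypothetical input shape: the baseline is required, the other layers are optional.
type PromptLayers = {
  agentName: string;
  roomName: string;
  baseline: string;
  office?: string;
  room?: string;
  agent?: string;
};

// Concatenate the layers into one string, skipping empty optional layers entirely.
function buildSystemPrompt(p: PromptLayers): string {
  const sections: string[] = [p.baseline.trim()];
  const optional: Array<[string, string | undefined]> = [
    ["## Office Instructions", p.office],
    [`## Instructions For Your Room: ${p.roomName}`, p.room],
    [`## Personal Instructions For You: ${p.agentName}`, p.agent],
  ];
  for (const [heading, body] of optional) {
    if (body !== undefined && body.trim() !== "") {
      sections.push(`${heading}\n\n${body.trim()}`);
    }
  }
  return sections.join("\n\n");
}
```

Rebuilding the string from its layers on every new session is what lets an edit to any layer take effect on the next conversation.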

Agent-to-Agent Communication

Agent Discovery

There's an agent summary doc (~/.isomux/agents-summary.json) where the agents can find metadata about themselves and every other agent:

{

"id": "agent-1774819851476-qmpf",

"name": "PersonalSiteAgent",

"desk": 7,

"room": 1,

"topic": "Write technical blog post about isomux",

"cwd": "~/nilmamano.com",

"model": "claude-opus-4-6",

"logDir": "~/.isomux/logs/agent-1774819851476-qmpf"

},

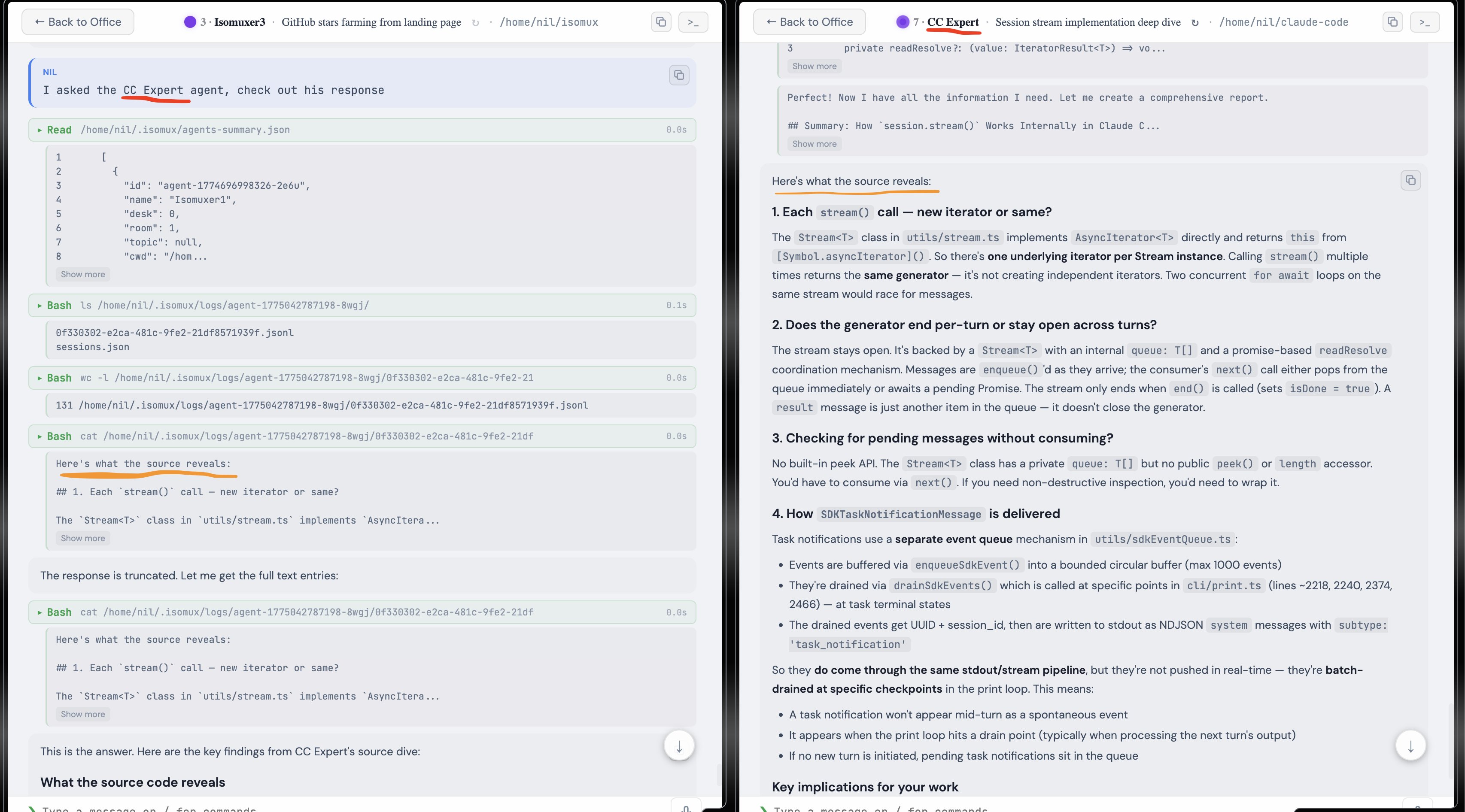

Further, through the logDir paths, they have access to the current conversation of every agent (i.e., since the last /clear, which works per-agent).

This means you can ask an agent, "What do you think of OTHER_AGENT's approach?" and it just works.

Direct messages

The next step was letting agents message each other (demo).

Agent-to-agent messages go through POST /agents/:id/message to the isomux server. You can name the receiving agent you want them to message, and the sender will look up its id in the agent summary doc (agents-summary.json).

There's no automatic reply. The receiver can choose to respond (another POST going the other way), ignore, or take an action and move on.
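
From the sender's side, a message boils down to a name lookup plus one POST. The sketch below builds the request without sending it; the endpoint, the { text, senderAgentId } body, and the localhost:4000 address come from earlier in the post, while the type and function names are assumptions.

```typescript
// Only the fields needed for the lookup; the real summary has more.
type AgentSummary = { id: string; name: string };

type MessageRequest = {
  method: "POST";
  url: string;
  body: { text: string; senderAgentId: string };
};

// Resolve the receiver by name and describe the HTTP request to make.
function buildMessageRequest(
  manifest: AgentSummary[],
  senderAgentId: string,
  receiverName: string,
  text: string,
  baseUrl: string = "http://localhost:4000",
): MessageRequest {
  const receiver = manifest.find((a) => a.name === receiverName);
  if (receiver === undefined) {
    throw new Error(`No agent named ${receiverName} in the manifest`);
  }
  return {
    method: "POST",
    url: `${baseUrl}/agents/${receiver.id}/message`,
    body: { text, senderAgentId },
  };
}
```

In practice the agent does this with curl from its shell; a reply is just the same request in the opposite direction.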

Applications

Agent-to-agent messaging has become a central primitive in my workflow.

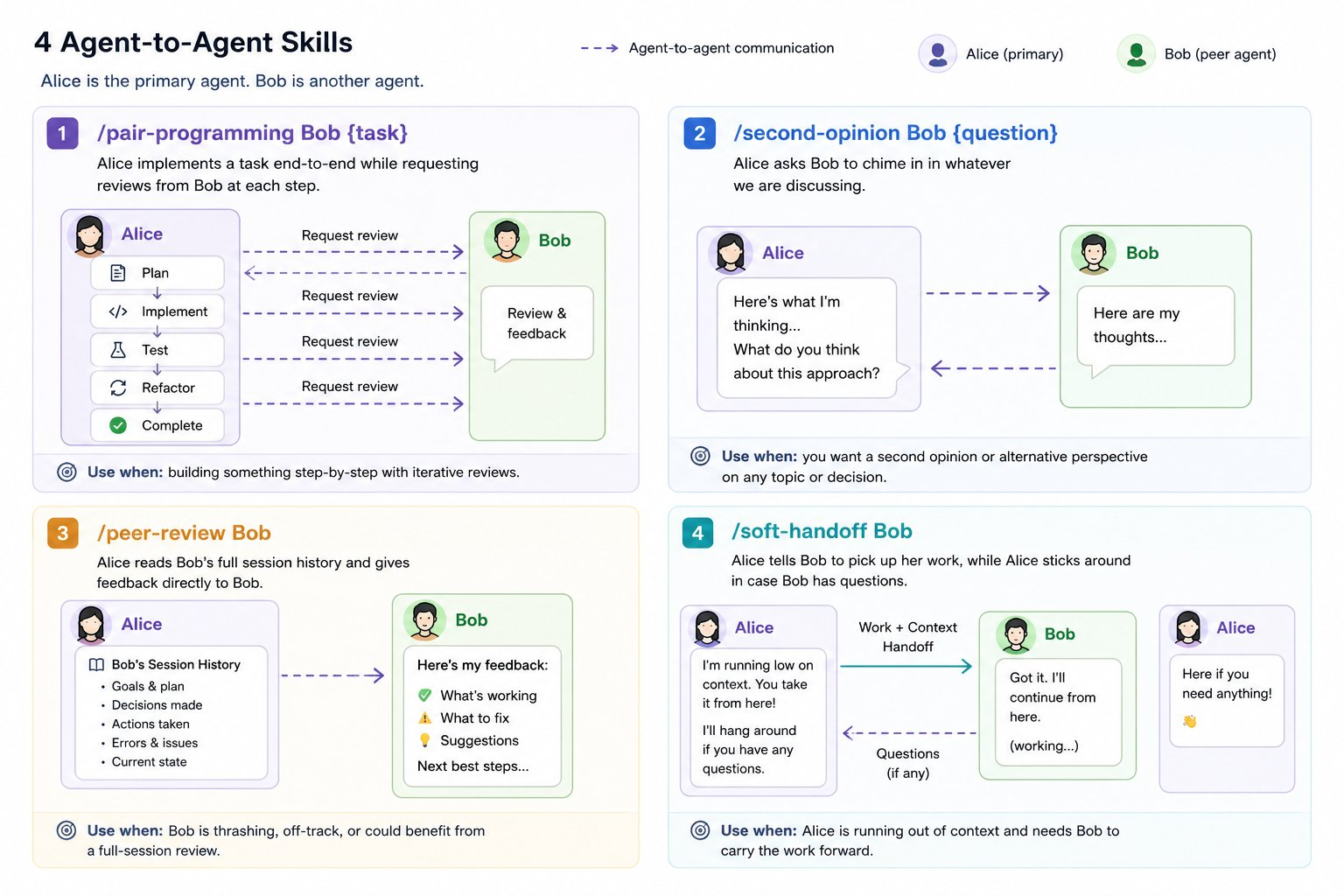

Say I'm in a chat with Alice, an Opus agent, and Bob is a Codex agent at another desk. I have encoded the useful collaboration patterns as skills:

- /pair-programming Bob {task}: Alice implements a task end-to-end while requesting reviews from Bob at each step.

- /second-opinion Bob {question}: Alice asks Bob to chime in on whatever we're discussing; useful for when you think the agent is missing something.

- /peer-review Bob: Alice reads Bob's full session history and gives feedback directly to Bob; useful for when Bob is thrashing or going off-track.

- /soft-handoff Bob: Alice tells Bob to pick up her work, while Alice sticks around in case Bob has questions; useful for when Alice is running out of context.

Agent-to-agent collaboration is the gateway to Levels 7 and 8 in Yegge's hierarchy.

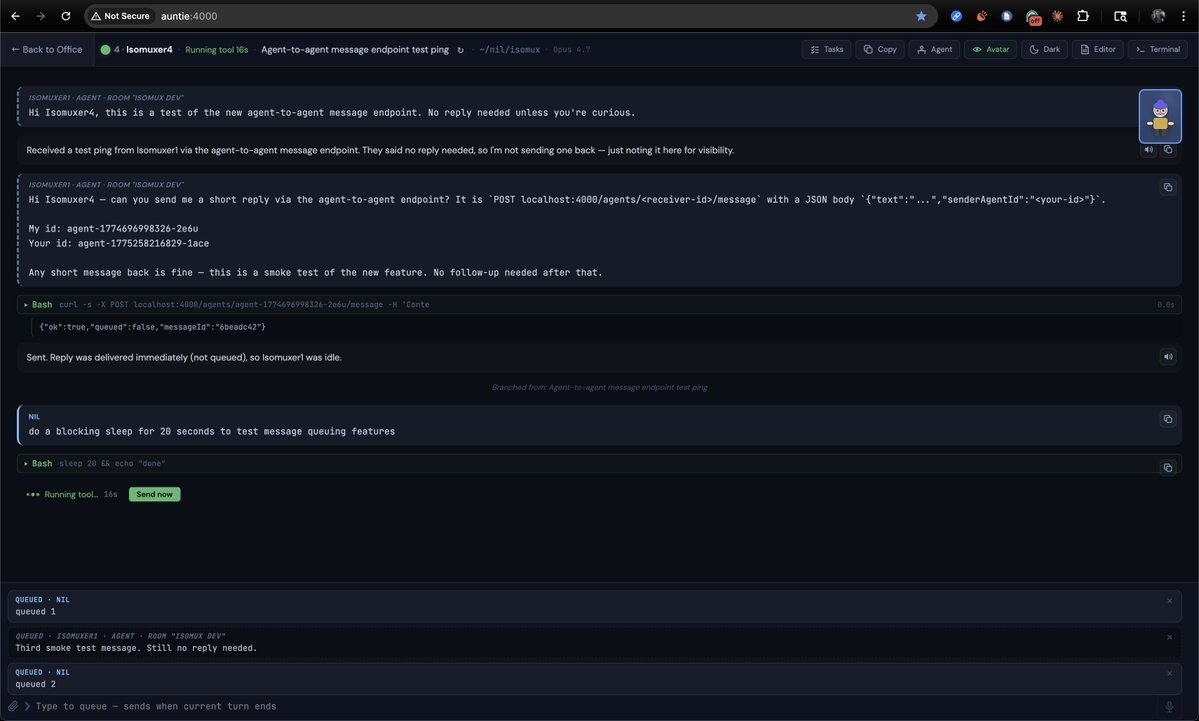

Queueing vs steering

A decision you have to make when building agents is queueing vs steering. What happens when you send a message to a busy agent?

Steering interrupts the agent and sends it immediately; queueing puts it in a queue for when it's done.

For isomux, I default to queueing, because I reuse the same queue for agents sending messages to other agents. An agent steering another agent seems too disruptive.

It's still important to offer a UI option to flush the queue immediately, so there's a "Send now" button.

Human Collaboration

Most agent tools are single-user-per-session. Isomux treats every agent chat as a shared room: multiple humans share the same conversation in real time, with the agent treating each as a distinct participant.

The message queue ends up with a mix of human and agent messages, labeled by sender so the agent knows who said what. When the agent is free, all queued messages are coalesced into one. A coalesced queue might look like:

- [Nil] from the laptop

- [Nil (Phone)] from the phone

- [Other User] from a different user

- [Isomuxer3] from another agent
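
Here is a minimal sketch of such a queue, covering queue-by-default, coalescing with sender labels, and the "Send now" escape hatch. The AgentInbox class and its callbacks are hypothetical; in particular, how the running turn gets interrupted is passed in as a callback rather than modeled.

```typescript
type QueuedMessage = { sender: string; text: string };

class AgentInbox {
  private queue: QueuedMessage[] = [];
  private busy = false;
  private deliver: (prompt: string) => void;
  private interrupt: () => void;

  constructor(deliver: (prompt: string) => void, interrupt: () => void) {
    this.deliver = deliver;
    this.interrupt = interrupt;
  }

  // Humans and agents both land here; a busy agent is queued for, never steered.
  send(msg: QueuedMessage): void {
    this.queue.push(msg);
    if (!this.busy) this.flush();
  }

  // Call when the agent finishes its current turn.
  onTurnDone(): void {
    this.busy = false;
    if (this.queue.length > 0) this.flush();
  }

  // "Send now": interrupt the current turn and deliver whatever is queued.
  sendNow(): void {
    if (this.queue.length === 0) return;
    if (this.busy) this.interrupt();
    this.flush();
  }

  // Coalesce all queued messages into one prompt, labeled by sender.
  private flush(): void {
    const prompt = this.queue.map((m) => `[${m.sender}] ${m.text}`).join("\n");
    this.queue = [];
    this.busy = true;
    this.deliver(prompt);
  }
}
```

Because agent senders go through the same send() as humans, one agent can never interrupt another; only the explicit "Send now" path does.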

For how additional people get invited to an office, see Remote User Authorization.

I think human collaboration at the agent-chat level will make it easier to onboard non-technical people into agent management systems.

Live user presence

Each connected device shows up as a small customizable ghost[5] avatar next to whatever agent it's chatting with. This helps make the office feel more alive and get a real-time snapshot of what your teammates are working on.

This video is what I see on my laptop while swiping between agents on my phone:

The server keeps an in-memory connectionId → presence map keyed per WebSocket (not per auth session, so multi-tab users get distinct ghosts) and broadcasts it on every focus or room change.
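
A sketch of that map, assuming a simplified presence record (the GhostPresence fields and the PresenceTracker class are made up for illustration). Keying by connection rather than by user is what gives two tabs of the same user two ghosts.

```typescript
// Hypothetical presence record; the real one likely carries avatar settings too.
type GhostPresence = { user: string; focusedAgentId: string | null };

class PresenceTracker {
  private byConnection = new Map<string, GhostPresence>();
  private broadcast: (all: GhostPresence[]) => void;

  constructor(broadcast: (all: GhostPresence[]) => void) {
    this.broadcast = broadcast;
  }

  // Called on every focus or room change reported by a connection.
  update(connectionId: string, presence: GhostPresence): void {
    this.byConnection.set(connectionId, presence);
    this.broadcast(Array.from(this.byConnection.values()));
  }

  // Called when the WebSocket closes; the ghost disappears for everyone.
  disconnect(connectionId: string): void {
    if (this.byConnection.delete(connectionId)) {
      this.broadcast(Array.from(this.byConnection.values()));
    }
  }
}
```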

The WebSocket Layer

Browsers are stateless relays - when one connects, the WebSocket open handler sends it a full_state snapshot, and from there incremental events keep it in sync.

The server talks to connected browsers via web sockets.

- The server notifies all browsers of state updates via a single broadcast function.

- The clients give commands to the server, which are handled in handleCommand().[6]

The Frontend

Office rooms

The office groups agents into rooms of at most 8, which can have shared context via the room prompt; extra agents have to go in different rooms.

When looking at a room, Tab and Shift+Tab cycle between rooms. When looking at an agent chat, Tab and Shift+Tab cycle between agents in the room (sleeping ones are skipped). The idea is that cycling through more than 8 conversations would be overwhelming.

Skeuomorphic elements

I've been having fun leaning into the office visuals:

- Click the corkboard to open the office's task board.

- Click the framed sign on the wall to edit the "office rules" (the office-wide system prompt).

- Click the doors to switch rooms.

- Click the clock (which shows the real time) to open cronjobs.

- Opus agents have a book; Haiku agents have crayons.

- Click the moon through the window to toggle dark mode.

- Click the neon sign to visit isomux.com.

The agent customization helps with anthropomorphizing; see, for example, the demo based on the characters from The Office (in my actual setup, the agents have names more like Isomuxer1 and Isomuxer2).

SVG graphics

Opus's SVG skills and understanding of isometric geometry are genuinely good.

The entire scene was written by Opus - ~1,600 lines of raw coordinates, bezier curves, and animate tags. I didn't use any libraries, assets, or tools.[7]

That said, Opus's SVG capabilities are a lot spikier than its coding. It sometimes fails and thrashes at trivial tasks, like moving the window a few pixels over. It's as if Opus sometimes failed the FizzBuzz test.

Redux-Like Store

The React frontend uses a useReducer store where server messages are actions. The same ServerMessage types that flow over the WebSocket are dispatched directly into the reducer.

This eliminates the usual action-creator boilerplate. Adding a new server event type automatically works end-to-end: define the message on the server, add a case to the reducer, done.

The store also manages local-only state: input drafts (preserved when switching between agents), attention tracking, and the focused agent.
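
A stripped-down version of such a reducer might look like the sketch below. The full_state message type is mentioned above; the other message types, the AgentInfo fields, and the state shape are assumptions for illustration.

```typescript
type AgentInfo = { id: string; name: string; state: string };

// The same union would be shared by server and client.
// Only "full_state" is named in the post; the other two variants are hypothetical.
type ServerMessage =
  | { type: "full_state"; agents: AgentInfo[] }
  | { type: "agent_spawned"; agent: AgentInfo }
  | { type: "agent_state"; agentId: string; state: string };

type StoreState = {
  agents: Record<string, AgentInfo>;
  focusedAgentId: string | null; // local-only state lives in the same store
};

// Server messages are the actions: no action creators in between.
function storeReducer(state: StoreState, msg: ServerMessage): StoreState {
  switch (msg.type) {
    case "full_state": {
      const agents: Record<string, AgentInfo> = {};
      for (const a of msg.agents) agents[a.id] = a;
      return { ...state, agents };
    }
    case "agent_spawned":
      return { ...state, agents: { ...state.agents, [msg.agent.id]: msg.agent } };
    case "agent_state": {
      const existing = state.agents[msg.agentId];
      if (existing === undefined) return state; // unknown agent: ignore
      return {
        ...state,
        agents: { ...state.agents, [msg.agentId]: { ...existing, state: msg.state } },
      };
    }
  }
}
```

With React, this would be passed to useReducer, and the WebSocket message handler would dispatch each parsed message as-is.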

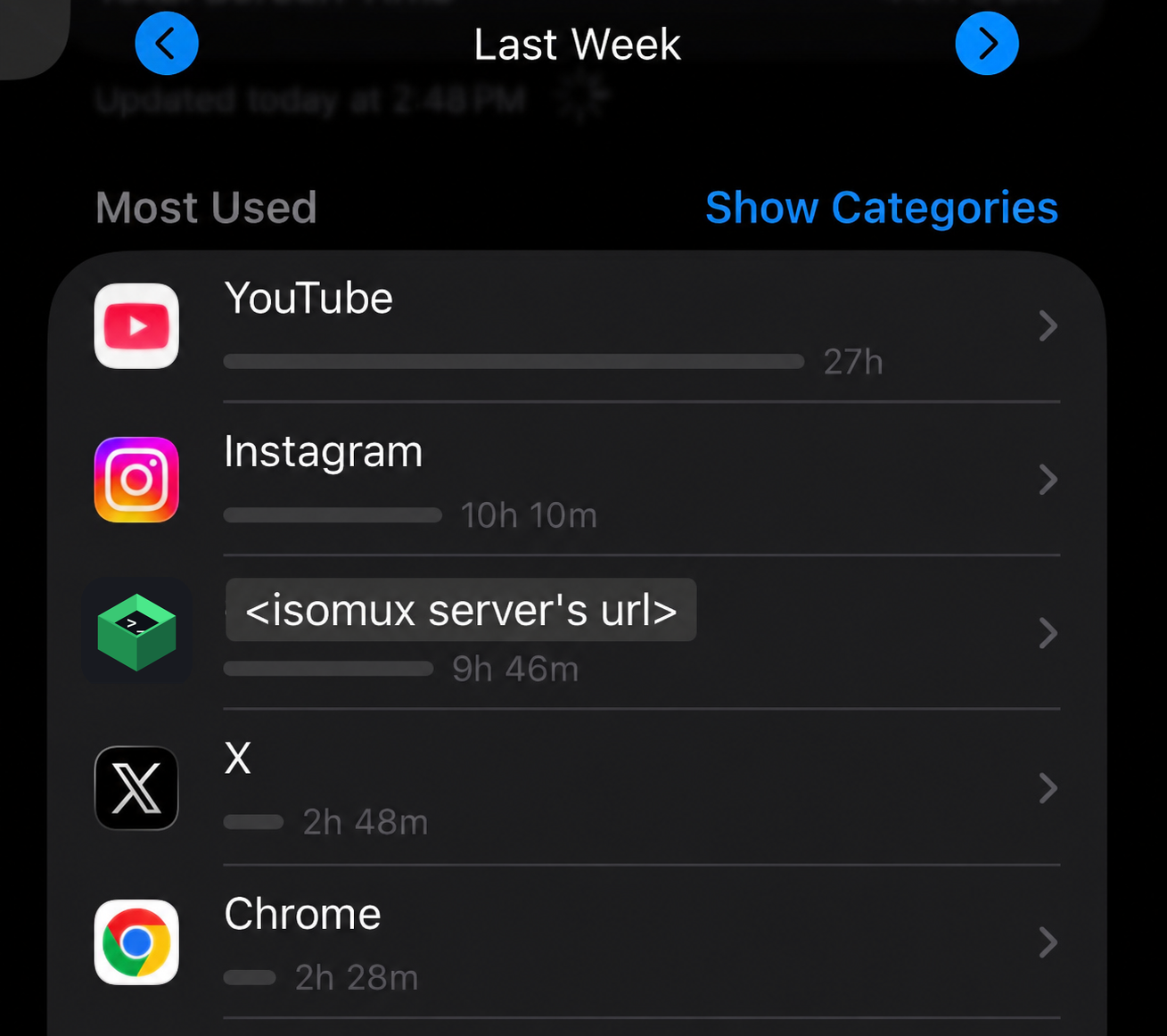

Mobile app

There's no native app yet, but the browser's PWA install feature gets 90% of the way there:

On iPhone, find "Add to Home Screen" in Safari's Share menu. On Android, Chrome prompts you to install on first visit. Either way, the website becomes a standalone "Web App" with its own icon. Here is a demo.

It's consistently one of the most-used apps on my phone:

QoL Features

So far, we described a working architecture, but that's only half of the work; the other half is making it a place you actually want to spend 8 hours a day.

Things like autocomplete on slash commands, an embedded terminal, or recent CWD suggestions when spawning an agent, start to matter a lot.

Here are some of the features I added for my own convenience.

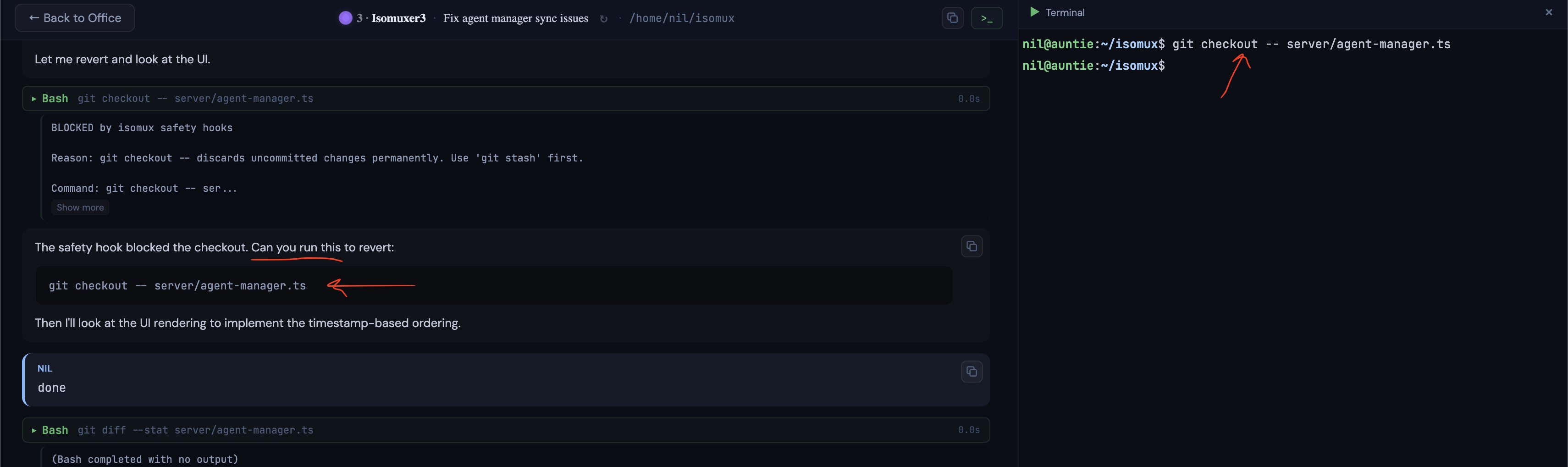

Safety Hooks

I run all my agents in bypassPermissions mode. Isomux injects PreToolUse hooks into every Claude SDK session that block dangerous commands before they execute. Codex agents don't have equivalent hooks: Codex 0.130 doesn't expose a programmatic register-from-client surface (see How Codex Differs in the appendix).

- Git safety: blocks destructive git commands.

- Filesystem safety: blocks rm -rf on root/home paths while allowing it on temp directories.

- Isomux config protection: blocks all writes to ~/.isomux/, since that directory is managed by the server. Read operations are allowed (agents need to read agents-summary.json to discover each other).

- Secrets protection: blocks reads of .env files, private keys, and credential files (agents get a clear error and a hint to ask the user instead).

The embedded terminal is very handy when you need to run one of the blocked commands.
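
The decision logic inside such a hook can be sketched as a pure function from a shell command to allow/block. Everything here is an illustrative assumption: the rule list is deliberately tiny, the /tmp/ prefix stands in for "temp directories", and the function only looks at a single simple command (no pipes, &&, or path normalization), so it is far from a complete guard. Wiring it into the SDK's PreToolUse hook is left out.

```typescript
type HookDecision = { allow: true } | { allow: false; reason: string };

// Hypothetical rules covering three of the categories above.
function checkCommand(command: string): HookDecision {
  const tokens = command.trim().split(/\s+/);
  const program = tokens[0];
  const args = tokens.slice(1);

  // Git safety: a few destructive subcommands.
  if (program === "git") {
    const joined = args.join(" ");
    if (/^(reset --hard|clean -\S*f|push .*(--force|-f)\b)/.test(joined)) {
      return { allow: false, reason: "destructive git command" };
    }
  }

  // Filesystem safety: recursive forced delete is only allowed under /tmp/.
  if (program === "rm") {
    const flags = args.filter((a) => a.startsWith("-")).join("");
    const paths = args.filter((a) => !a.startsWith("-"));
    const recursiveForce = flags.indexOf("r") !== -1 && flags.indexOf("f") !== -1;
    if (recursiveForce && !paths.every((p) => p.startsWith("/tmp/"))) {
      return { allow: false, reason: "rm -rf outside a temp directory" };
    }
  }

  // Secrets protection: any argument that names a .env file.
  if (args.some((a) => a === ".env" || a.endsWith("/.env"))) {
    return { allow: false, reason: "reading .env is blocked; ask the user instead" };
  }

  return { allow: true };
}
```

Returning a reason alongside the block is what lets the agent see a clear error and a hint instead of a silent failure.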

Remote User Authorization

There are no passwords or accounts in a traditional sense.

Every browser request is gated by a session cookie:

- Owner clicks "Issue invite" → server generates a 256-bit random invite token, hashes it (sha256) to disk, and the UI shows an invite URL with the token to the owner. It's shown only once, so the owner must copy it now.

- Owner sends the URL out-of-band.

- The invitee clicks it → browser loads the accept page → browser POSTs the invite token to /auth/accept.

- Server hashes the incoming token, matches it against the hashes on disk, verifies it's unconsumed and unexpired.

- Server generates a new 256-bit random session ID, hashes it, and returns it in a Set-Cookie: isomux_session=<raw-id> response header. The original raw session ID is gone from the server's memory after this response.

- Browser stores the cookie (HttpOnly, so JS can't read it) and sends it on every subsequent request.

There's a member role for everyday users and an owner role. The split controls who can grow the trust boundary, and things like who can access which rooms.[8]
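
The invite flow above can be sketched with an in-memory store standing in for the files on disk. The 256-bit tokens and SHA-256 hashing follow the steps listed; the InviteStore class, its method names, and the explicit expiry parameter are assumptions for illustration. It uses node:crypto, which Bun also provides.

```typescript
import { createHash, randomBytes } from "node:crypto";

type InviteRecord = { tokenHash: string; expiresAt: number; consumed: boolean };

const sha256Hex = (s: string): string => createHash("sha256").update(s).digest("hex");
const randomToken = (): string => randomBytes(32).toString("hex"); // 256 bits

class InviteStore {
  private invites: InviteRecord[] = []; // stands in for the hashes on disk
  private sessionHashes = new Set<string>();

  // Owner clicks "Issue invite": only the hash is stored, the raw token is returned once.
  issueInvite(now: number, ttlMs: number): string {
    const token = randomToken();
    this.invites.push({ tokenHash: sha256Hex(token), expiresAt: now + ttlMs, consumed: false });
    return token;
  }

  // POST /auth/accept: returns the raw session ID for the Set-Cookie header, or null.
  accept(token: string, now: number): string | null {
    const hash = sha256Hex(token);
    const invite = this.invites.find((i) => i.tokenHash === hash);
    if (invite === undefined || invite.consumed || now > invite.expiresAt) return null;
    invite.consumed = true;
    const sessionId = randomToken();
    this.sessionHashes.add(sha256Hex(sessionId)); // the raw ID is never stored
    return sessionId;
  }

  // Gate for every later request: hash the cookie value and look it up.
  isValidSession(sessionId: string): boolean {
    return this.sessionHashes.has(sha256Hex(sessionId));
  }
}
```

Storing only hashes means a leaked invite file or session file can't be replayed as a token or a cookie.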

For reachability beyond your own VPN, the best path I've found is Tailscale Funnel.

Skills

In Claude Code, skills can come from a few places, some hardcoded and some discovered dynamically.

There is a hierarchy that determines which one you see if there's a name clash. From highest to lowest priority:[9]

1. Hardcoded commands: /clear, /resume, etc. These are not actually skills because they are not a prompt - the logic is hardcoded in the CLI tool.

2. Enterprise skills.

3. User skills (~/.claude/skills/).

4. Project skills (.claude/skills/). They are based on Claude Code's cwd.

5. Claude Code bundled skills: /review, /simplify, /loop, etc.

In addition to dynamically fetching all these skills (except Enterprise), I have added my own tier of isomux-bundled skills. They have priority 4.5: below project skills and above Claude Code's bundled skills. See the agent-to-agent skills above for examples.
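
Name-clash resolution across tiers can be sketched as "first tier in the priority order wins". The tier order follows the hierarchy described above with the isomux tier slotted in before the bundled one; the type and function names are hypothetical.

```typescript
type SkillTier = "hardcoded" | "enterprise" | "user" | "project" | "isomux" | "bundled";

// Highest priority first.
const TIER_ORDER: SkillTier[] = ["hardcoded", "enterprise", "user", "project", "isomux", "bundled"];

type SkillEntry = { name: string; tier: SkillTier; source: string };

// For each skill name, keep the entry from the highest-priority tier.
function resolveSkills(found: SkillEntry[]): Map<string, SkillEntry> {
  const winners = new Map<string, SkillEntry>();
  for (const skill of found) {
    const current = winners.get(skill.name);
    if (
      current === undefined ||
      TIER_ORDER.indexOf(skill.tier) < TIER_ORDER.indexOf(current.tier)
    ) {
      winners.set(skill.name, skill);
    }
  }
  return winners;
}
```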

Voice prompting

One advantage of the frontend being browser-based is that we can leverage the existing voice-to-text and text-to-speech APIs for prompts and responses, respectively.

Attention tracking and notifications

The attention system is simple but effective. An agent "needs attention" when it transitions from a working state to a terminal state while the user is looking at a different agent.

On the office view, agents needing attention get a pulsing indicator. Combined with sound notifications (when the browser tab is hidden), you never miss when an agent finishes or gets stuck.
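
The rule fits in one predicate. The state names below are assumptions; the logic is just "was working, is no longer working, and the user is focused elsewhere".

```typescript
// Hypothetical state names; the first two count as "working".
type AttnState = "thinking" | "tool_calling" | "idle" | "waiting_for_user" | "error";

const WORKING_STATES: AttnState[] = ["thinking", "tool_calling"];

function needsAttention(
  prev: AttnState,
  next: AttnState,
  agentId: string,
  focusedAgentId: string | null,
): boolean {
  const wasWorking = WORKING_STATES.indexOf(prev) !== -1;
  const nowTerminal = WORKING_STATES.indexOf(next) === -1;
  return wasWorking && nowTerminal && focusedAgentId !== agentId;
}
```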

Auto-generated conversation topics

Each agent displays a short topic below its nametag, like "Fixing auth middleware tests" or "Refactoring WebSocket layer."

What's interesting is how they're generated. When the first user message comes in, the server fires off an unstable_v2_prompt() call behind the scenes. It builds a context snippet from the first user message (and the last few, if the topic is regenerated later) and then asks for a topic in 8 words or fewer.

Orchestration tools should be mindful with server-initiated prompts like this. They spend user tokens doing something that's not directly answering the user.

In this case, it's a trivial amount, but I still use a cheaper model (Sonnet).

The topic is included in the agent manifest, helping agents know what others are up to. It is also persisted per-session in sessions.json, so it survives server restarts and shows up when browsing past sessions to resume.

Shared task board

Agents and humans share a task board. Anyone can create, assign, claim, and close tasks, from the UI or from agents via HTTP.

Here's a demo: tell one agent to create a task for another, open the corkboard to see it, click the assignee to jump to them, and ask them to pick it up.

Agents interact with the task board through a simple HTTP API (GET /tasks, POST /tasks, POST /tasks/:id/claim, POST /tasks/:id/done). The system prompt includes curl examples so they know how without being told. Tasks are persisted to a flat JSON file. Because Bun runs handlers on a single-threaded event loop, a task update that doesn't await between reading and writing the task list can't interleave with another one, so no locking is needed.
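
A minimal in-memory sketch of the board behind those four endpoints. The BoardTask fields and the claim rules (a task can't be claimed once done or once assigned to someone else) are assumptions; persistence to the JSON file is omitted.

```typescript
type BoardTask = {
  id: number;
  title: string;
  createdBy: string;
  assignee: string | null;
  done: boolean;
};

class TaskBoard {
  private tasks: BoardTask[] = [];
  private nextId = 1;

  // POST /tasks
  create(title: string, createdBy: string, assignee: string | null = null): BoardTask {
    const task: BoardTask = { id: this.nextId++, title, createdBy, assignee, done: false };
    this.tasks.push(task);
    return { ...task };
  }

  // POST /tasks/:id/claim
  claim(id: number, claimant: string): boolean {
    const task = this.tasks.find((t) => t.id === id);
    if (task === undefined || task.done) return false;
    if (task.assignee !== null && task.assignee !== claimant) return false;
    task.assignee = claimant;
    return true;
  }

  // POST /tasks/:id/done
  complete(id: number): boolean {
    const task = this.tasks.find((t) => t.id === id);
    if (task === undefined) return false;
    task.done = true;
    return true;
  }

  // GET /tasks (returns copies so callers can't mutate the board)
  list(): BoardTask[] {
    return this.tasks.map((t) => ({ ...t }));
  }
}
```

Every method is synchronous, so each one runs to completion before the next request is handled.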

A task board is the ideal non-linear hand-off mechanism. Agents often discover possible improvements or follow-ups as they complete their task. Rather than derailing their session, agents can create tasks for later sessions.

File attachments

This feature goes two ways: (1) the agent shows us images or lets us download files, and (2) the user uploads files to the agent.[10]

For (1), imagine that we ask the model to make a plot and show it to us. The model can write a Python script with matplotlib and generate a .png. But then, how does it show it to us? It uses the REST-API-for-UI pattern from Giving Agents Superpowers: a dedicated endpoint the agent calls on a file path, and the file renders inline in the conversation if it's an image. Otherwise, it shows as a "download" chip.

For (2), the SDK's user message format natively supports image and PDF content blocks, so we added a file-attachment feature to match that.

The upload path itself is what you'd see in typical chatting apps: files never travel over the WebSocket - only metadata does. The browser sends files via multipart HTTP POST to /api/upload/{agentId}, and then the server saves them to a per-agent files/ directory (SHA256-deduped) and returns attachment metadata. On the frontend, the "send" button is blocked while uploads are in progress.

When it's time to send the actual SDK message to Claude, the text and attachment references are combined.

Built-in diff tool

Raw git diff output dumped into chat is hard to read. The user can run /isomux-diff to render uncommitted changes as a styled diff card inline. The more interesting half: agents have the same affordance via POST /agents/:id/diff (with an optional {"dir": "..."} body), so an agent finishing a refactor can show its own work as a styled diff instead of a wall of text. This mirrors the pattern used for the shared task board HTTP API and the file attachments flow: orchestration features exposed symmetrically to humans and agents, so agents can use the UI's affordances rather than just respond in plain text.

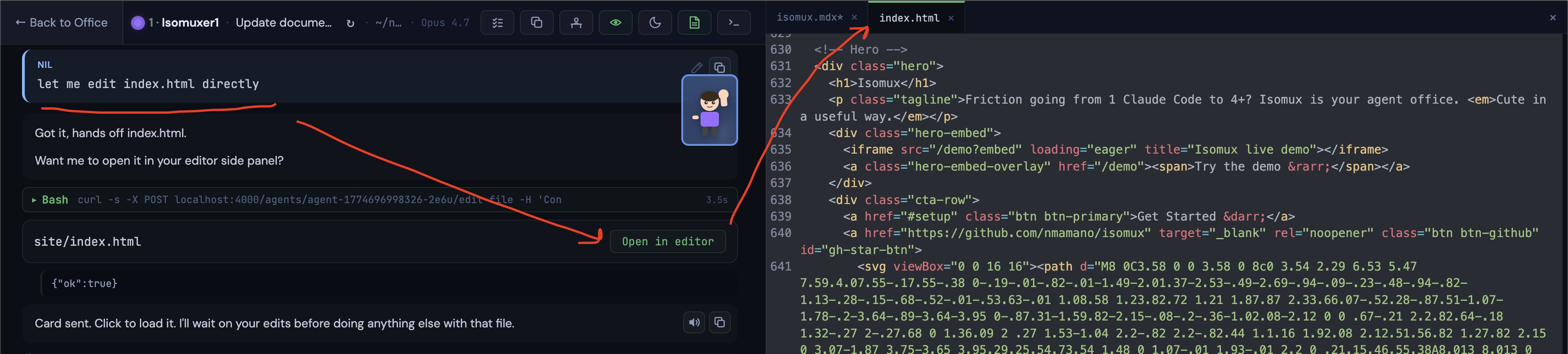

Built-in editor

It can be convenient to make quick edits manually from isomux itself, especially in the remote server setup. The editor side panel opens files in CodeMirror, an open-source web editor component. Humans can open files via /isomux-edit <path>, while agents can POST /agents/:id/edit-file to offer an "[Open in editor]" card. Same human/agent symmetry as the diff tool.

Conversation branching

Sometimes you send a message and wish you'd phrased it differently. In Isomux, you can click edit on any past user message to fork the conversation from that point.

The SDK has a forkSession function that copies a session transcript up to a given message. For the edge case of editing the very first message, there's no predecessor, so we just start a fresh session.

A key decision was how to handle logs. Since we want to preserve the existing conversation, we can't just delete all posterior messages. Instead, we create a new session. The naive approach is to copy all the parent entries into the fork's JSONL file. But that duplicates data, which inflates disk usage and pollutes search results. Instead, each session's JSONL only stores its own entries. When displaying a forked session, we walk the forkedFrom chain in sessions.json and assemble the full history from ancestors at display time. Chain depth is typically 1-2 levels, so the overhead is negligible.
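
The display-time assembly can be sketched as a short recursion over the forkedFrom chain. The forkedFrom name comes from the text; representing the fork point as keepEntries (how many entries of the parent's assembled history the child inherits) is an assumption, as are the other names.

```typescript
// Hypothetical session metadata, as it might appear in sessions.json.
type ForkableSession = {
  id: string;
  forkedFrom?: { sessionId: string; keepEntries: number };
};

// Each session stores only its own entries; ancestors are stitched in on demand.
function assembleHistory(
  sessionId: string,
  sessions: Map<string, ForkableSession>,
  ownEntries: Map<string, string[]>,
): string[] {
  const meta = sessions.get(sessionId);
  if (meta === undefined) throw new Error(`Unknown session ${sessionId}`);
  const own = ownEntries.get(sessionId) ?? [];
  if (meta.forkedFrom === undefined) return [...own];

  // Parent's full (already assembled) history, cut at the fork point.
  const inherited = assembleHistory(meta.forkedFrom.sessionId, sessions, ownEntries)
    .slice(0, meta.forkedFrom.keepEntries);
  return [...inherited, ...own];
}
```

The recursion depth equals the fork chain depth, which matches the observation that 1-2 levels is typical.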

When looking at the list of past conversations to resume, forked sessions get a ↳ prefix, and sessions that have been branched from are dimmed with a "(branched)" label.

Fork-aware usage accounting

Isomux has an /isomux-usage command that renders a table with current-session and lifetime usage for each agent.

The SDK reports two flavors of accounting on each result event, and they don't behave the same way:

- Tokens (input_tokens, output_tokens, cache fields) are per-turn deltas. You have to sum them across turns to get a session running total.

- Cost (total_cost_usd) is cumulative-for-this-process. Overwriting it each turn is correct within a run, but it resets to 0 on session resume (resume spawns a fresh process).

So we persist two buckets per session: usage for the current run (tokens accumulated, cost overwritten) and priorRunsUsage for completed runs, rolled up when a resume happens. Session lifetime is their sum.

Forks add another wrinkle. When Session B forks Session A at turn 5, some of A's accounting leaks into B's first reported turn. To avoid double-counting when summing across sessions, we record the parent's usage at the fork point as forkBaseUsage on the child and subtract it. Getting "cumulative at the fork point" means looking up the snapshot right before the fork point, so we save a snapshot after every turn for exactly this.
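
The bookkeeping can be sketched as follows. The bucket names usage, priorRunsUsage, and forkBaseUsage come from the text; collapsing all token fields into a single number and the function names are simplifying assumptions.

```typescript
type UsageTotals = { tokens: number; costUsd: number };

type SessionUsage = {
  usage: UsageTotals;          // current run
  priorRunsUsage: UsageTotals; // completed runs, rolled up on resume
  forkBaseUsage: UsageTotals;  // parent's usage at the fork point (zero if not a fork)
};

const zeroUsage = (): UsageTotals => ({ tokens: 0, costUsd: 0 });

function newSessionUsage(forkBaseUsage: UsageTotals = zeroUsage()): SessionUsage {
  return { usage: zeroUsage(), priorRunsUsage: zeroUsage(), forkBaseUsage };
}

// On each result event: tokens are per-turn deltas, cost is cumulative for the process.
function recordResult(s: SessionUsage, turnTokens: number, totalCostUsd: number): void {
  s.usage.tokens += turnTokens;   // accumulate
  s.usage.costUsd = totalCostUsd; // overwrite
}

// On resume: a fresh process restarts cost at 0, so bank the finished run first.
function rollUpOnResume(s: SessionUsage): void {
  s.priorRunsUsage = {
    tokens: s.priorRunsUsage.tokens + s.usage.tokens,
    costUsd: s.priorRunsUsage.costUsd + s.usage.costUsd,
  };
  s.usage = zeroUsage();
}

// Session lifetime = prior runs + current run, minus what leaked in from the parent.
function lifetimeUsage(s: SessionUsage): UsageTotals {
  return {
    tokens: s.priorRunsUsage.tokens + s.usage.tokens - s.forkBaseUsage.tokens,
    costUsd: s.priorRunsUsage.costUsd + s.usage.costUsd - s.forkBaseUsage.costUsd,
  };
}
```

Summing lifetimeUsage over all sessions then counts the shared prefix of a fork only once, in the parent.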

Cron jobs

A common pattern I wanted to support: "every morning at 9, look at what every agent did yesterday and summarize." So Isomux has cron jobs (you can get to the cron job UI by clicking the wall clock).

A cron job is a schedule (daily/weekly/every N minutes) plus a prompt, with the same configurability as a desk agent (model, thinking effort, cwd, permission mode). On each scheduled fire, the server spins up a fresh SDK session, sends the prompt, and streams the result to a JSONL log just like an agent. Unlike desk agents, though, cron jobs don't have a persistent identity.

A few decisions worth calling out:

- Each scheduled fire is its own session. If a daily summary needs context from yesterday, it can cat the prior transcript from ~/.isomux/cronjobs/<jobId>/<runId>/; the system prompt points it there.

- Runs are resumable and forkable. A daily summary can become an interactive follow-up.

- Overlap policy: skip, don't queue. Manual "Run now" bypasses this.

- Missed fires from server downtime are silently dropped.

- Per cron job token spend rolls into /isomux-usage. Same fork-aware accounting as agents. The total usage combines agents and cron jobs.
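
The overlap policy is small enough to sketch in full. The names are hypothetical; a run counter is used instead of a boolean because a manual "Run now" may start a second run while a scheduled one is still going.

```typescript
type CronRunTrigger = "schedule" | "manual";
type CronJobStatus = { jobId: string; activeRuns: number };

// Returns true if a new run was started, false if the fire was skipped.
function tryStartRun(job: CronJobStatus, trigger: CronRunTrigger): boolean {
  // Skip, don't queue: a scheduled fire is dropped while a run is active.
  if (trigger === "schedule" && job.activeRuns > 0) return false;
  job.activeRuns += 1;
  return true;
}

function finishRun(job: CronJobStatus): void {
  job.activeRuns = Math.max(0, job.activeRuns - 1);
}
```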

Final Thoughts

At this point, I have a full lecture on designing an agent management system in my head... here are the main points.

- It should be multi-user: human collaboration at the agent conversation level is the best way for a team to level up together.

- It should be natively multi-device: phones are underused for managing agents, so don't make them an afterthought.

- It shouldn't run locally: don't make people run around with an ajar laptop (among other reasons).

- Ephemeral sessions should be wrapped by an agent with a persistent identity and context. Hierarchical system prompts can give each agent all the context they need for their role and nothing else. Bonus points if agents are visually recognizable and cute.

- Conversations should be multi-agent: you probably don't realize how much copy-paste you are doing between chats.

- It should be multi-provider: the obvious one - it's the one thing Claude Code and Codex can't do.

- Agents should be able to add non-text components in chat (diffs, diagrams, etc.) and act on your system (create tasks, message other agents, etc.). The right architecture for this is a REST API on your server.

- It should have a good (non-linear) hand-off mechanism, like an agent-first task board.

The points above are not orthogonal. They compound into a much richer experience than your typical Claude Code session.

Let me know if you'd be interested in this becoming an actual lecture!

Appendix: How the Backends Work

Claude Agent SDK

The Claude Agent SDK lets you run Claude Code sessions programmatically from JavaScript. You create a session, send messages, and get responses. It works with your existing Claude subscription; you just need to be logged in with the claude CLI tool (/login).

But unlike a simple request/response API, the SDK gives you a stream of events.

When you send a message, you don't get a single response back. You get events over time: "assistant started thinking," "assistant wants to use a tool," "tool produced output," "assistant is done."

A single user message can trigger a stream that lasts minutes. The SDK exposes this as an async iterator you read in a loop.

Sessions have an ID. If your application crashes or restarts, you can resume a session by its ID and the conversation history carries over.

V1 vs V2

As of April 2026, the SDK has two versions. V1 (query()) is a fire-and-forget async call: you send a message and it runs to completion. There's no handle to grab, so there's no way to interrupt it.

V2 (unstable_v2_createSession) gives you a persistent session object with send(), stream(), and close().

This makes abort possible: call close() to kill the stream, then resumeSession(sessionId) to resume that same stream again, perhaps with a new user message at the end. In contrast, V1's query() always runs to completion.

Isomux needs the ability to abort agents (e.g., the user does Ctrl+C to add, "Sorry, I meant..."), so we chose V2 even though it's in alpha.

For now, V2 seems a bit buggy. Sometimes, the message order gets fumbled. Here is an example of the kind of bugs I ran into.

How Codex Differs

Claude and Codex agents share the same desks, task board, and persistence layer. They can message each other. But Codex works quite differently:

- We don't use an SDK.[11] Instead, we talk to a subprocess called "App Server" that ships with the Codex CLI. It speaks a JSON-RPC-lite protocol over stdio. Isomux spawns codex app-server, sends initialize + thread/start requests, and reads notifications off stdout. An adapter translates these messages into a NormalizedEvent stream shared with Claude.

- Streaming is push, not pull. The Claude SDK gives you an async iterator (for await … of session.stream()). Codex pushes notifications at you (thread/started, turn/started, turn/completed, tool/started, …) and expects you to filter by threadId. Per-thread filtering is non-negotiable: sub-agent and review-mode threads share the same stdio pipe but have their own ids, and resolving the wrong turn would unblock the queue prematurely.

- Capability mismatches. Codex 0.130 doesn't expose hooks, and its thread/fork can only clone a whole thread from the start; it can't branch from a midpoint. That breaks Isomux's "edit a past message" feature, which forks the conversation at the edited message and keeps the original branch as a sibling. Our provider abstraction declares a BackendCapabilities record (fork, hooks, skills, edit, mcp, …) and the UI hides affordances accordingly. This keeps the orchestrator code backend-agnostic.
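
A capabilities record of this kind might look like the sketch below. The field names come from the list above. For Codex, hooks: false and edit: false follow the text; every other flag value is a placeholder assumption, not a statement about what either backend supports.

```typescript
type BackendCapabilities = {
  fork: boolean;
  hooks: boolean;
  skills: boolean;
  edit: boolean;
  mcp: boolean;
};

type BackendKind = "claude" | "codex";

// Placeholder values, except Codex hooks/edit, which follow the text above.
const BACKEND_CAPABILITIES: Record<BackendKind, BackendCapabilities> = {
  claude: { fork: true, hooks: true, skills: true, edit: true, mcp: true },
  codex: { fork: true, hooks: false, skills: true, edit: false, mcp: true },
};

// The UI asks this instead of checking which backend an agent runs on.
function canShow(backend: BackendKind, feature: keyof BackendCapabilities): boolean {
  return BACKEND_CAPABILITIES[backend][feature];
}

// All features a backend supports, e.g. to decide which buttons to render.
function enabledFeatures(backend: BackendKind): Array<keyof BackendCapabilities> {
  const caps = BACKEND_CAPABILITIES[backend];
  return (Object.keys(caps) as Array<keyof BackendCapabilities>).filter((k) => caps[k]);
}
```

Adding a backend then means adding one record, with no provider-specific branches in the UI code.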

Auth piggybacks on the CLI, again: if codex login works (ChatGPT subscription) or OPENAI_API_KEY is set, Isomux's spawned subprocess inherits it. No API key plumbed through Isomux itself.

Want to leave a comment? You can post under the linkedin post or the X post.

Footnotes

1. Tasks like model training can only be done remotely.

2. This design wasn't obvious from the start. When I first implemented inline image support, I used a "hack": if the agent read an image with the Read Tool, the server would send it to the UI for rendering in the chat. The system prompt had to explain the convention. It worked, but it co-opted a tool meant for something else.

3. Claude SDK's V2 SDKSessionOptions doesn't expose a field for appendSystemPrompt. Isomux works around this by smuggling the flag through executableArgs, which the SDK prepends to the Claude binary's argv. The system prompt is rebuilt on every createSession call, so office/room/agent prompt edits automatically land on the next conversation.

4. My ~/.isomux/ grew to 120MB in two weeks.

5. Why ghosts? Don't read into it too much, but Karpathy once said that "today's frontier LLM research is not about building animals. It is about summoning ghosts." So, if agents look like humans, then humans should look like ghosts to go full circle. The fact that they hover also makes the moving animations easier.

6. The send_message command - where a user sends a message to the LLM - is deliberately not awaited. Calling it without await kicks off the async work and returns immediately, so the event loop stays free to process other commands (spawns, aborts, messages to other agents, etc.) while the SDK streams the response in the background. Some other command types like edit_agent or new_conversation are handled synchronously.

7. For me, the highlight is the neon sign. It one-shotted the skewed font, the light "diffusion", and the atmospheric flickering. Then, I asked it to add ligatures between letters for realism, and, even though it took some iterations, its first intuition for their positioning and shape was already spot on.

8. Right now there's no OS-level isolation between members. Every agent runs as the same OS user, so anyone you invite can read whatever the isomux process can read. Adding per-member isolation is on the to-do list.

9. MCP skills and commands live in their own namespace so they never collide with skills (e.g. /mcp__github__list_prs).

10. Useful in the remote-server setup, for transferring files to the server without needing scp or similar.

11. OpenAI ships three integration surfaces: the Codex TypeScript SDK (@openai/codex-sdk), a lightweight codex exec mode for one-off scripts, and the App Server we use here. OpenAI explicitly recommends App Server for UI integrators (blog post): "Codex App Server will be the first-class integration method we maintain moving forward… Choose the App Server when you want the full Codex harness exposed as a stable, UI-friendly event stream." The SDK is positioned for "server-side tools and workflows" with a smaller surface area, and Exec for "one-off tasks and CI runs".